Q&A with Professor Tim Cheng on designing better AI computer chips

Professor Tim Cheng, Vice President for Research and Development at the Hong Kong University of Science and Technology, leads the AI Chip Center for Emerging Smart Systems (ACCESS), founded in 2020.

ACCESS brings together a group of specialists to fuel the global expansion of artificial intelligence (AI) by building novel chips that improve performance and energy efficiency. Croucher caught up with Cheng to learn more about how ACCESS is revolutionising AI chip design.

In what ways is artificial intelligence already used in our everyday lives?

Anything generated by a computer is artificial, but there are differences in the levels of intelligence, and we have seen enormous growth in those levels recently. AI is used everywhere, including in speech recognition, self-driving cars, facial recognition and smart sensors, as well as in healthcare settings, for example in MRI or CT scanners, which use some degree of intelligence.

What holds AI back from wider adoption?

Accuracy is a major issue: if you want to replace human assistance, AI must reach a certain level of accuracy, especially for safety-critical or data protection applications.

Improving accuracy, however, is not straightforward. There are three key components driving AI development. Applications, such as those given above, are the top layer. Supporting these are the algorithms: the software that runs various tasks such as machine learning, computer vision processing, or natural language processing.

All this runs on hardware: computing power. Datasets have been growing explosively, and to cope with that the AI community has developed much more advanced, sophisticated and complex algorithms. As a result, accuracy has been increasing, but this requires an enormous amount of extra computing resources and energy.

It used to be that to train an AI system, the computing resources you would need were doubling every year, pretty much following the so-called ‘Moore’s Law’. But since around 2012, the amount of computing resources you need to train such a system has doubled every 3.4 months, which means the resources required increase by roughly a factor of ten every year.
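The arithmetic behind that comparison can be checked with a short sketch. The function name `annual_growth_factor` is hypothetical, chosen only for this illustration; the doubling periods are the ones quoted in the answer above.

```python
def annual_growth_factor(doubling_months: float) -> float:
    """Return how many times a quantity multiplies in 12 months,
    given the number of months it takes to double."""
    if doubling_months <= 0:
        raise ValueError("doubling_months must be positive")
    # 12 / doubling_months is the number of doublings that fit in one year.
    return 2 ** (12 / doubling_months)


# Doubling periods quoted in the interview (illustrative inputs only).
yearly = annual_growth_factor(12)    # doubling once a year
recent = annual_growth_factor(3.4)   # doubling every 3.4 months

print(f"Doubling every 12 months: x{yearly:.1f} per year")
print(f"Doubling every 3.4 months: x{recent:.1f} per year")
```

A 3.4-month doubling period fits about three and a half doublings into a year, which is where the order-of-magnitude annual increase comes from.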

So we need to improve accuracy further to enable new applications, but we will not be able to afford it because of the additional computing resources required.

How is ACCESS working to overcome this problem?

We need to design new hardware – new computing chips – which can better match the unique computational characteristics of each particular algorithm. There are many companies that already design specialised chips, but this can take years and require hundreds of designers.

What we are doing at ACCESS is helping to cut the time and labour needed to design these chips. One way is by reconsidering the fundamental architecture employed by computing chips – they used to be very computation-centric, but since algorithms require the storage and processing of huge amounts of data, newer chips are more memory-centric.

But the main way is by creating new design methodologies and tools. The main principle behind these is co-optimisation. I mentioned earlier the three layers of AI – applications, algorithms, and hardware – and by designing each with the others in mind we can optimise the process.

For example, when you want to solve a problem, there are often multiple ways of doing it. But if you know what the limitations of your hardware are, you can adapt your algorithm to better match them, and vice versa.

We co-design AI chips to consider all layers, meaning we use the bottom-up approach of customisation, and the top-down approach of adaptation, which can be iterated for greater optimisation.

What progress has already been made?

The Internet of Things describes objects that have their own sensors plus the processing ability and software to connect and exchange data with other devices. Traditionally the data is sent to larger processors to make sense of it, but AIoT (i.e., AI plus IoT) devices have embedded intelligence, to process and analyse the data on-site.

These devices therefore need to be ultra-lightweight and ultra-low-power while having high accuracy and potentially dealing with real-time data processing – a perfect use-case for our design principles.

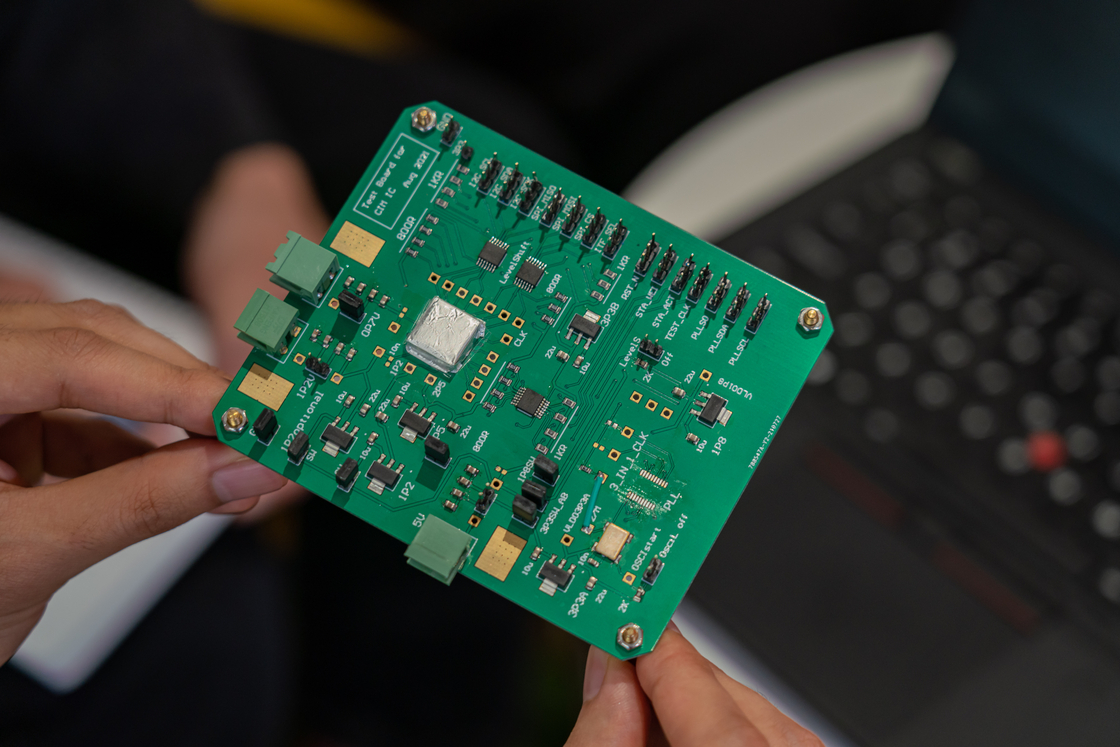

We applied our co-design approach to an image sensor that needed to capture video data and analyse it in real time to count people going into and out of a space, such as a lift or a meeting room.

People counting is not difficult for AI today, but usually you need to send the image from the sensor to a server, either on your computer or in the cloud, for analysis, and then send the result back, which has been a bottleneck. But if the camera and the embedded intelligence are only doing one thing – if they are only interested in counting people, and not in any other aspect of the video capture – then the processing can be done on the camera. This can run with just a button battery supporting the system throughout the entire lifecycle of the intelligent camera.

With this principle, we can design chips that, for example, take just text, image, audio or video data, or so-called time series data like an electrocardiogram (ECG) of your heart, and process just those things optimally.

We can therefore design such customised chips semi-automatically, and we have already had great interest from companies wanting to customise their hardware by working with us to design specialised AI chips.

How is ACCESS different from other AI development centres?

This is a multinational and multidisciplinary centre for advancing chip design and design automation technologies. We are collaborating with universities including Stanford in the USA, and we’re close to signing agreements for research partnerships with top-notch European universities.

But we are unique in that we cut across the three domains of application, algorithm, and hardware. Many companies or academic centres are highly specialised in a single domain, but our approach means we are able to do things that would otherwise not be possible.

There is a saying that the devil is in the details, but for us the devil is in the interfaces between these layers. By focusing there, we are driving a revolution in AI.

For this reason, we also focus on talent development. There is a tremendous shortage of, and demand for, talent in combined AI and chip design. ACCESS is a place to nurture this talent. We have a world-leading group of more than 30 faculty members working with almost 100 PhD students, plus the 30 to 60 full-time researchers and engineers we are going to hire.

This combination is attractive for people with advanced degrees who want to learn, contribute, and develop a successful career. We believe with our critical mass, if we attract top-notch people, it will be a great success.

We also really want to make an impact, so that the results can be transferred to industry or made commercially available in the form of start-ups or spin-offs. This should make the AI revolution accessible to all, not just to huge companies like Google, Tesla or Apple that have the means to make AI chips entirely for their own use.